Some Notes About How I Write Haskell

In many of the above cases, what I am doing is deliberately opting out of abstractions in favor of concrete knowledge of what my program is actually doing. Abstractions can enable powerful behavior, but abstractions can also obscure the simple features of what a program is doing, and all else being equal, I’d rather communicate to a reader, “I am appending two lists”, instead of the more generic, “I am combining two values of a type that implements Monoid”.
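A minimal sketch of that contrast, not taken from the original post: the function names `joinNames` and `combine` are made up for illustration. The first signature tells the reader it is a list append; the second only promises that the type has a `Monoid` instance.

```haskell
-- Concrete: the type says "lists" and the operator says "append".
-- (joinNames is a hypothetical name used only for this example.)
joinNames :: [String] -> [String] -> [String]
joinNames xs ys = xs ++ ys

-- Generic: works for any Monoid, so the reader has to find out which
-- instance is in play before knowing what "combining" means here.
-- (combine is likewise a hypothetical name.)
combine :: Monoid a => a -> a -> a
combine x y = x <> y
```

Both compile to the same thing when `combine` is used at a list type; the difference is only in what the code communicates at the call site.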

External dependencies in general have a cost: it’s not a huge one, but a cost nonetheless, and I think it’s worth weighing that cost against the benefit pretty actively.

A minor cost (one that matters, but not all that much) is total source size. Haskell programs tend to pull in a lot of dependencies, which means compiling all of them can take a very long time and produce a lot of intermediate stuff, even if a comparatively small amount makes it into the final program. This isn’t a dealbreaker (if I need something, I need it!) but it’s something to be cognizant of. If my from-scratch build time triples because I imported a single helper function, then maybe that dependency isn’t pulling its weight.

A bigger cost — in my mind, anyway — is breakage and control. It’s possible (even likely) my code has a bug, and then I can fix it, run my tests, push it. It’s also possible that an external dependency has a bug: fixing this is much more difficult. I would have to find the project and either avail myself of its maintainer (who might be busy or overworked or distracted) or figure it out myself, learn the ins and outs of that project, its abstractions, its style, its contribution structure, put in a PR or discuss it on an email list, and so forth. And I can freeze dependency versions, sure, but the world goes on without me: APIs break, bugs get introduced, functionality changes, all of which is a minor but present liability.

It’s not a dealbreaker, but it’s a cost, one that I weigh against the value I’m getting from the library. Should I depend on a web server library for my dynamic site? Well, I’m not gonna write a whole web server from scratch, and if I did, it’d take forever and be worse than an existing one, so the benefits far outweigh the costs. But on the other hand, if I’m pulling in a few shallow helper functions, I might consider replicating their functionality inline instead. There’s always a cost: is it worth the weight of the dependency?
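As one illustration of a “shallow helper”, here is a sketch of a locally defined `chunksOf`, the kind of function that might otherwise be imported from the `split` package (`Data.List.Split`). The post does not name a specific helper, so this choice is an assumption, and the handling of a non-positive chunk size is a decision made for this sketch rather than a claim about the library’s behavior.

```haskell
-- Local stand-in for a small helper that could otherwise come from a package.
-- Splits a list into pieces of length n; the last piece may be shorter.
chunksOf :: Int -> [a] -> [[a]]
chunksOf n xs
  | n <= 0    = error "chunksOf: chunk size must be positive"  -- assumption for this sketch
  | null xs   = []
  | otherwise =
      let (chunk, rest) = splitAt n xs
      in chunk : chunksOf n rest
```

A handful of lines like this is cheap to own and test; the trade-off only tips toward the dependency once the helper stops being shallow.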

A sheep in wolves clothing

Now, the specific context of that quote related to the way in which the Agile movement in software development has become corrupted by an over-constrained, over-structured approach which gives IT directors the comfort of saying they have changed without really having to do so. It also plays to the desire of said executives to announce major, costly initiatives that will only be expected to deliver after that executive has moved on, leaving some other poor sod to pick up the pieces. That has been a depressingly common characteristic of most adoptions of new methods, so it’s no surprise that the SAFe founders played to that weakness in purchasing executives.

The curse of “high-impact medium-generality” optimizations

We can rate software optimizations as low/medium/high in terms of their impact and their generality.

Impact is how much difference the optimization makes when it works; generality is how broadly applicable the optimization is across different situations. Thinking about these factors explicitly can be helpful for predicting how beneficial (or harmful!) an optimization will be in practice.

That’s putting it mildly but this blog entry is really a rant: I hate high-impact medium-generality optimizations.

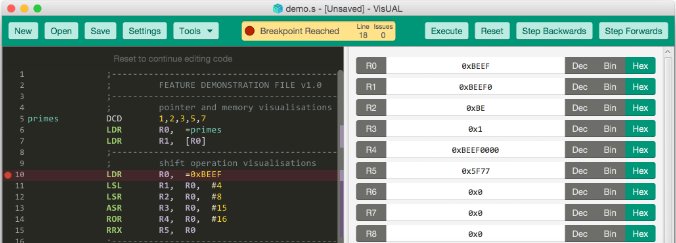

VisUAL - A highly visual ARM emulator

VisUAL has been developed as a cross-platform tool to make learning ARM Assembly language easier. In addition to emulating a subset of the ARM UAL instruction set, it provides visualisations of key concepts unique to assembly language programming and therefore helps make programming ARM assembly more accessible.

It has been designed specifically to use as a teaching tool for the Introduction to Computer Architecture course taught at the Department of Electrical and Electronic Engineering of Imperial College London.

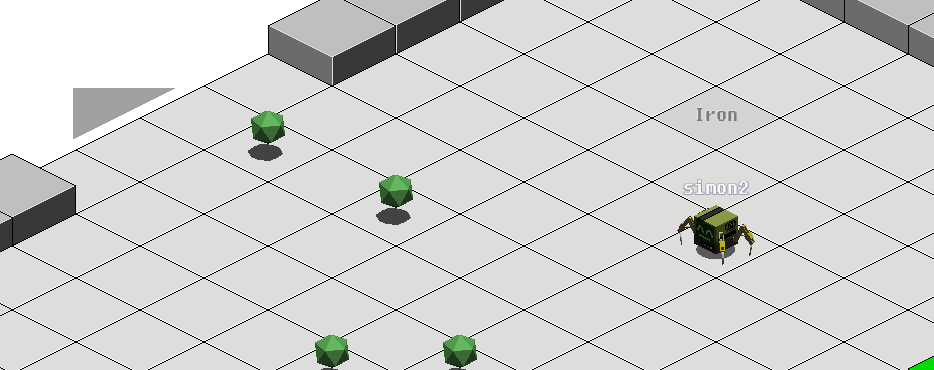

Much Assembly Required

Program the 8086-like microprocessor of a robot in a grid-based multiplayer world.

The tyranny of big suite enterprise software best practices

Best practice pronouncements are used to authenticate and credentialize software solutions, irrespective of whether they match the disrupted real-world experience of companies in various marketplaces. By definition, best practices declare there are no other processes that are more effective, or they would be better. So say the BSSC enterprises claiming best practices in their implementation.

This belies a deeper, often unspoken truth: even when technology executives know that bloated BSSC may not be best for their business, especially in times of accelerating marketplace disruption, a lack of familiarity with emergent digital practices on the part of the board and business executives clouds and constrains their judgement. But then, no one gets fired for hiring major analysts to advise, nor for implementing household BSSC names. Companies have great impediments to change.